AI Hacking Threat Scales to Industrial Level, Targeting Global Systems

New intelligence reveals that criminal groups and state-linked actors are leveraging advanced large language models to exponentially accelerate cyberattacks against global infrastructure.

PHILIPPINES —

Key facts

- AI-powered hacking has progressed from a nascent problem to an industrial-scale threat within three months.

- Threat actors from China, North Korea, and Russia are using commercial LLMs like Gemini, Claude, and OpenAI tools.

- Attackers are prioritizing exploiting software flaws and cloud services over traditional methods like phishing or stolen credentials.

- Google identified the first known zero-day exploit believed to be developed with AI, tied to a planned mass attack.

- The threat extends beyond AI models themselves to the broader AI ecosystem, including exposed API keys and insecure integrations.

- Experts warn that the pace of vulnerability exploitation has accelerated, leaving little time for defenders to patch systems.

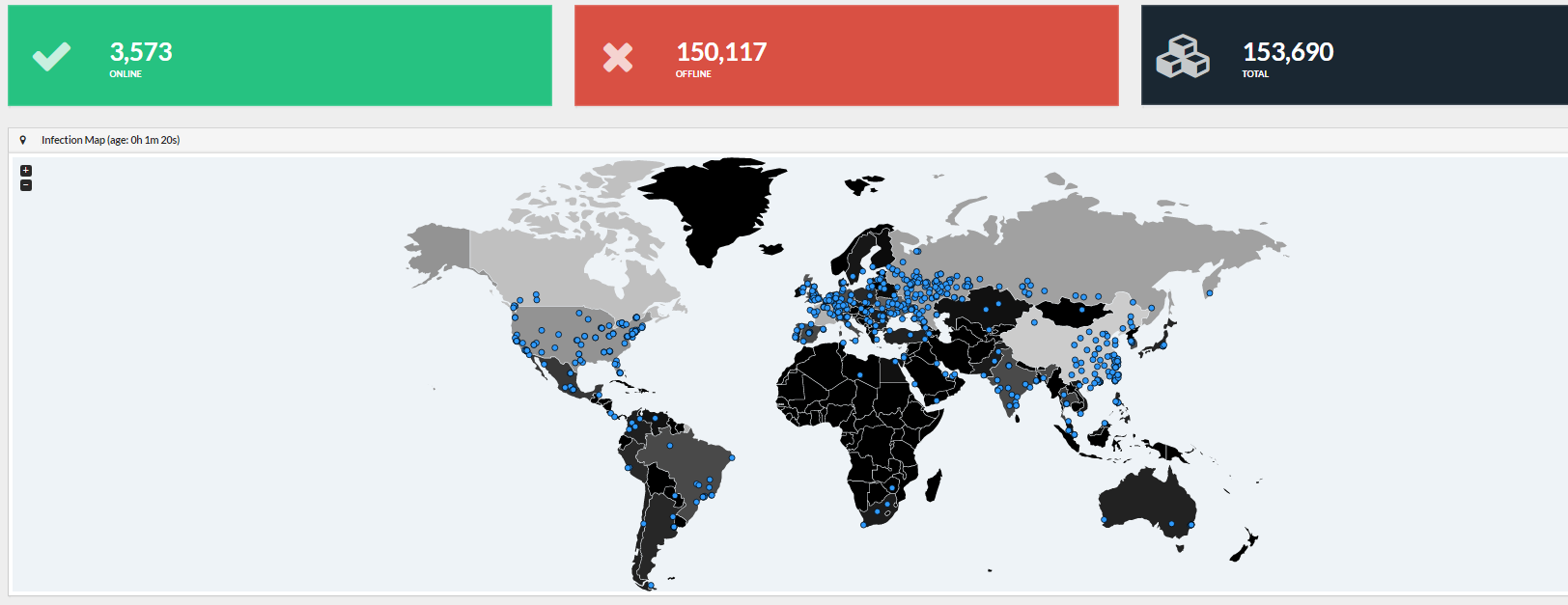

From Niche Issue to Global Industrial Threat

AI-powered hacking has matured rapidly, transforming from an obscure technical problem into an industrial-scale global threat within a remarkably short timeframe. Threat intelligence groups now warn that the vulnerability race is not an imminent risk but one that has already begun. Advanced models give attackers sophisticated tools that vastly boost the speed, scope, and cunning of their operations. Criminal syndicates and state-linked actors, specifically those connected to China, North Korea, and Russia, are widely deploying commercial large language models (LLMs), including Gemini, Claude, and OpenAI's models, to enhance their malicious capabilities. This new wave of cybercrime represents a fundamental shift in adversary behavior. Attackers are no longer limited to simple phishing emails or automating mundane tasks; they now use AI systems to perform complex reconnaissance, identify software weaknesses, and generate exploit code throughout the entire attack lifecycle. Consequently, researchers fear that AI is drastically lowering the technical barrier to launching sophisticated, multi-stage attacks against critical infrastructure.

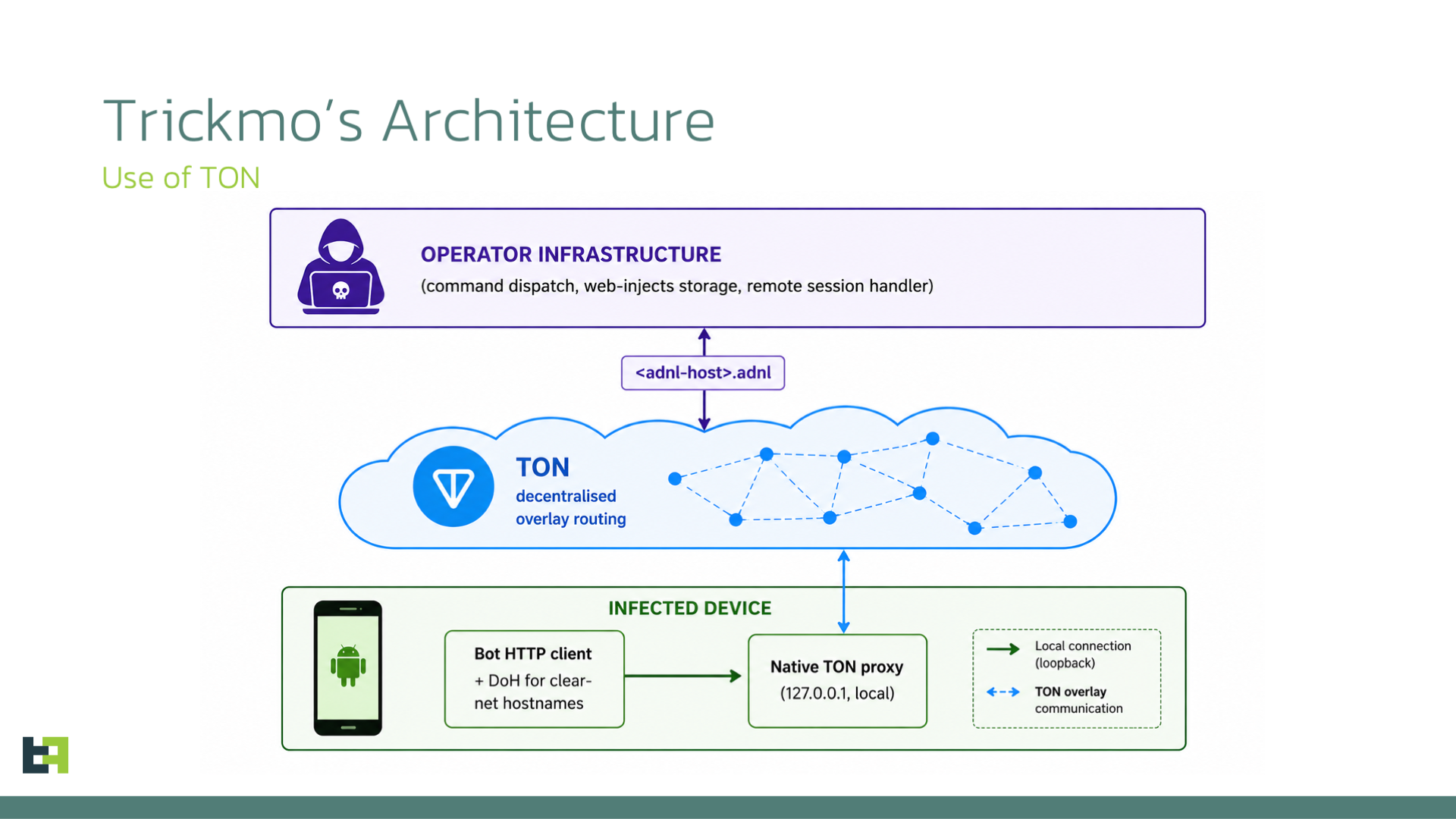

Shifting Attack Targets and Methods

The focus of malicious activity has decisively shifted from traditional weak points, such as compromised credentials, toward technical flaws in software and cloud services, making vulnerability exploitation the primary entry method for modern adversaries. These attacks often target APIs, SaaS applications, developer tools, and the burgeoning AI services themselves. An examination of the threat landscape revealed that attackers are paying close attention to the broader AI ecosystem, treating exposed API keys, poorly configured services, and insecure third-party integrations as new, profitable attack vectors. Attackers are also exploiting newly disclosed vulnerabilities with alarming speed. Criminal groups have been observed scanning the internet for exposed systems within hours or days of the public release of technical security details, sharply narrowing the window defenders have to apply emergency patches before an attack strikes. One particularly worrying development was Google's identification of the first known zero-day exploit believed to have been developed with artificial intelligence, signaling a dangerous new operational capability.

Expert Analysis and Global Concern

Security experts caution that the methodology for discovering bugs has irrevocably changed, shifting from traditional human-driven methods toward an LLM-assisted process. This evolution promises a volatile period for global cybersecurity. A global flashpoint came when Anthropic declined to release its new model, Mythos, citing its power and the threat it could pose to governments, financial institutions, and global stability if mishandled. The company specifically noted that Mythos had found zero-day vulnerabilities in every major operating system and web browser. Beyond these state-level concerns, threat groups have shown interest in experimental tools such as OpenClaw, which gained notoriety for letting users hand over large portions of their digital lives to an AI agent that lacked guardrails and showed an unfortunate tendency to mass-delete inboxes. Commentators suggested that while such tools may have defensive utility, they equally amplify the power of malicious actors.

The Velocity of Cybercrime and Defensive Gaps

The ability of LLMs to analyze dense technical documentation and rapidly generate malicious scripts is a core driver of this acceleration. That speed lets bad actors automate operational decisions, turning previously human-managed processes into fully automated attack chains. One recent investigation highlighted a case in which exposed Google Cloud API keys, following configuration changes, inadvertently granted unauthorized access to Gemini AI services, demonstrating the risk posed by poor internal security hygiene. The implications extend to the geopolitical stage. The keen interest observed among both Chinese and North Korean actors in using AI to discover vulnerabilities underscores that this is not merely a technical risk but a strategic capability being rapidly militarized by state-aligned groups. The result leaves defenders grappling with a reality in which the pace of attack far outstrips the industry's ability to coordinate timely defensive measures across myriad interconnected systems.

The bottom line

- State and criminal actors are widely using commercial AI models (Gemini, Claude, OpenAI) to scale and refine sophisticated cyberattacks.

- The cyber threat has shifted toward exploiting flaws in cloud services and APIs, opening new attack surfaces across the broader AI ecosystem.

- The rate of discovering and exploiting vulnerabilities has increased dramatically, forcing defenders to rethink and shorten their patching cycles.

- Analysts warn that AI is reducing the technical hurdles for complex attacks, making specialized knowledge less of a barrier to entry for malicious groups.

- Defenders must secure not only the AI models themselves but also the vast network of exposed API keys and insecure integrations that power AI infrastructure.
