Meta Uses AI Bone Analysis to Detect Underage Users, Denies Using Facial Recognition

The social media giant expands age verification with visual AI that scans profiles for clues such as height and bone structure, while facing a $375 million penalty in New Mexico over child safety failures.

THAILAND —

Key facts

- Meta deploys AI visual analysis to scan photos and videos for clues like height and bone structure to estimate user age.

- The technology is not facial recognition and does not identify specific individuals, Meta states.

- Accounts suspected of belonging to users under 13 will be deactivated; owners must verify age to avoid deletion.

- Meta expands its teen-detection technology, which places suspected teens into Teen Account protections, to Instagram in 27 EU countries and Brazil, and to Facebook in the US.

- A New Mexico jury found Meta violated state law by misleading customers about platform safety, ordering $375 million in damages.

- Meta advocates for age verification at the app store and operating system level, a policy gaining traction in US states.

- AI-driven review of underage-account reports achieves higher accuracy and faster resolutions than human review alone, Meta claims.

AI Scans Profiles for Age Clues

Meta is deploying artificial intelligence to scan users' entire profiles, including photos, videos, posts, comments, and bios, to detect those who may be under 13 years old. The system analyzes visual cues such as height and bone structure, as well as contextual signals like birthday celebrations or mentions of school grades, to estimate a user's age. The company emphasized in a blog post that the technology is not facial recognition. “We want to be clear: this is not facial recognition,” Meta stated, adding that the AI does not identify the specific person in an image; instead, it looks at general themes and visual cues to estimate the person’s approximate age. If the AI determines that an account likely belongs to someone underage, the account is deactivated, and the account holder must provide proof of age through Meta’s age verification process to prevent its deletion.

Expanding Teen Account Protections Globally

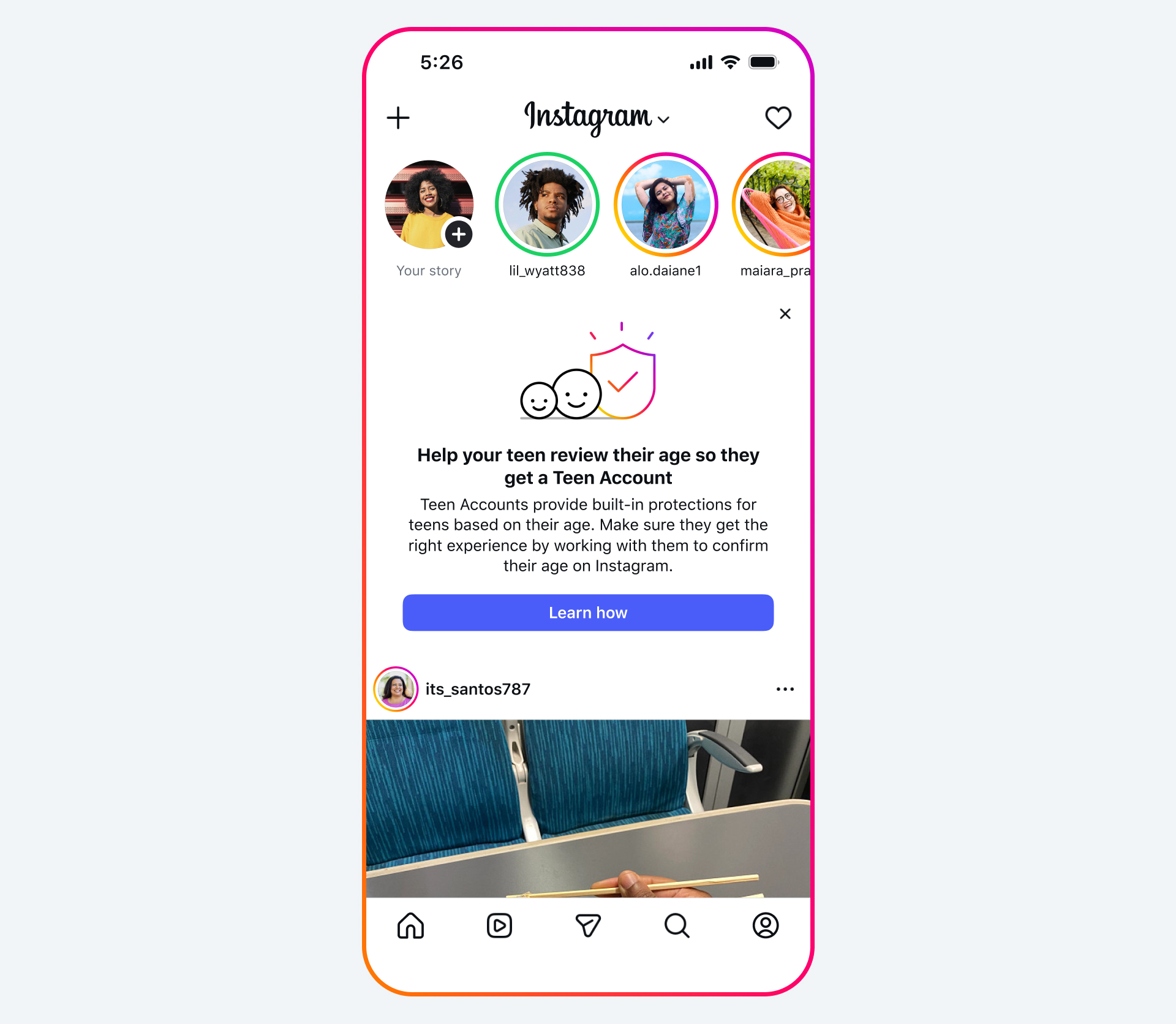

Since 2024, Meta has enrolled hundreds of millions of teens on Instagram, Facebook, and Messenger in Teen Accounts, which come with built-in protections such as stricter content controls, blocking messages from strangers, and preventing users under 16 from livestreaming. The company also uses technology to proactively find accounts it suspects belong to teens, even if they list an adult birthday, and places them in these protections. This detection technology first launched on Instagram in the US, Australia, Canada, and the UK, moving millions of accounts into age-appropriate settings. Meta is now expanding it to Instagram in 27 EU countries and Brazil, and to Facebook in the US for the first time, followed by the UK and EU in June. The company aims to expand it globally over the course of the year. Parents in the US will begin receiving notifications on Facebook and Instagram this month with information on how to check and confirm their teens’ ages, along with tips for discussing why it matters to provide the correct age online.

New Mexico Jury Orders $375 Million Penalty

The announcement comes days after a New Mexico jury found that Meta violated state law by misleading customers about the safety of its platforms and failing to protect children from predators. The jury ordered Meta to pay $375 million in damages, and the company may also be required to implement changes that it has previously threatened to leave the state over. The verdict underscores the high stakes for Meta as it faces growing scrutiny over its handling of underage users. The company has long advocated for age verification at the app store and operating system level, an approach that is gaining traction in Congress and in states like California and Colorado.

Meta Says AI Review Outperforms Human Teams

In addition to visual analysis, Meta is supplementing its human review teams with AI models that apply consistent evaluation criteria to every report of an underage account. The company says that in testing, this AI-driven review delivers higher accuracy and faster resolutions than human review alone. Meta is also simplifying its reporting flows, making it easier to report underage accounts both in the app and through the Help Center, and is strengthening its anti-circumvention measures to stop users it suspects are underage from creating new accounts. While many of these AI improvements are available worldwide, more advanced features such as visual analysis are currently limited to select countries as Meta works toward a broader rollout.

Industry-Wide Challenge of Age Assurance

Meta acknowledges that knowing someone’s age online is a complex, industry-wide challenge. The company has invested heavily in age assurance technology, including sophisticated AI to find people it believes are teens even if they list an adult birthday. It requires everyone to be at least 13 to use Instagram or Facebook and, for over a decade, has built tools, features, and resources to help teens have safe, age-appropriate experiences. These include Teen Accounts on Instagram, Facebook, and Messenger, whose built-in protections limit who can contact teens and what content they see.

Outlook: Balancing Safety and Privacy

Meta’s use of AI to analyze visual cues such as bone structure raises questions about privacy and the boundaries of automated surveillance. The company’s insistence that the technology is not facial recognition may not fully reassure critics who worry about the potential for misuse or mission creep. As Meta expands these tools globally, it will need to navigate varying regulatory landscapes and public expectations around data privacy. The $375 million penalty in New Mexico is a warning that failing to protect young users can carry significant financial and reputational costs. The company’s push for app-store-level age verification could shift much of that responsibility to app store and operating system providers, but such policies remain controversial and are still being debated in legislatures. For now, Meta is betting that AI-driven analysis can help it meet its safety obligations without tipping into invasive surveillance.

The bottom line

- Meta is using AI to scan entire profiles for visual and contextual clues to detect underage users, but says it is not facial recognition.

- Accounts suspected of belonging to users under 13 are deactivated; owners must verify age to prevent deletion.

- Meta's teen-detection technology, which places suspected teens into Teen Account protections, is expanding to Instagram in 27 EU countries and Brazil, and to Facebook in the US, with a global rollout planned.

- A New Mexico jury ordered Meta to pay $375 million for misleading customers about child safety on its platforms.

- Meta claims that AI-driven review of underage-account reports delivers higher accuracy and faster resolutions than human review alone.

- Meta advocates for age verification at the app store and operating system level, a policy gaining support in US states.
