Social Media Firms Deny Platforms Are Addictive to Children

Executives from Meta, TikTok, and Snapchat faced questions from Irish lawmakers over algorithms and online safety.

IRELAND —

Key facts

- Social media companies deny their platforms are addictive.

- Meta, TikTok, and Snapchat executives appeared before the Oireachtas Committee on Children and Equality.

- Richard Collard of TikTok stated the company does not agree with the term 'addictive'.

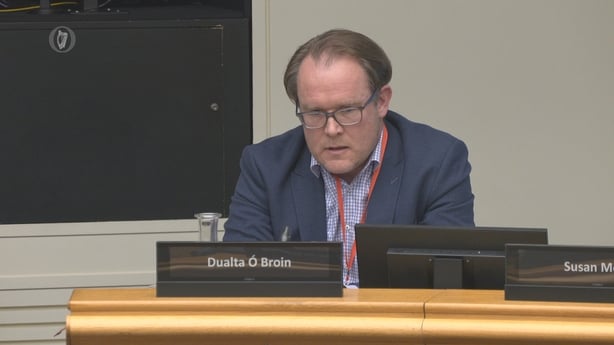

- Dualta Ó Broin of Meta discussed content policies related to suicide and eating disorders.

- Coimisiún na Meán launched investigations into Meta's recommender systems on Facebook and Instagram.

- Meta claims to have fundamentally changed how teenagers use Instagram and Facebook.

- Irish lawmakers questioned executives about protecting children online.

Tech Giants Face Lawmakers Over Child Safety

Executives from major social media platforms, including Meta, TikTok, and Snapchat, appeared before the Oireachtas Committee on Children and Equality to address concerns regarding the online safety of young users. The high-profile session saw lawmakers grill company representatives on issues ranging from harmful algorithms to the presence of inappropriate content on their services. This parliamentary scrutiny arrives at a critical juncture, with Ireland's media regulator, Coimisiún na Meán, having recently initiated investigations into Meta's recommender systems. The regulator's focus on how Facebook and Instagram promote content underscores the growing unease among authorities about the potential impact of these platforms on vulnerable users. The companies, while asserting their commitment to child well-being, faced direct questions about the design and impact of their platforms. The proceedings highlighted the ongoing tension between technological innovation and the imperative to safeguard children in the digital realm.

Denials of Platform Addictiveness

When directly questioned about the addictive nature of their services, representatives from the social media giants offered firm denials. Richard Collard, TikTok's Minor Safety Public Policy Lead, stated, "We wouldn't agree with the term addictive but that doesn't mean we don't take the wellbeing of children incredibly seriously." He elaborated that TikTok's recommender algorithm is designed to ensure users are presented with content relevant to their interests on a platform where 100 million pieces of content are uploaded daily. This stance directly challenges widespread concerns that the very design of these platforms, driven by sophisticated algorithms, can foster compulsive usage patterns among young people. The companies maintain that their technology is primarily focused on user engagement and content relevance, rather than on creating dependency. The committee's inquiry sought to understand the mechanisms behind content recommendation and whether these systems inadvertently expose children to harmful material. The executives' responses aimed to reassure lawmakers that robust measures are in place to mitigate such risks.

Content Moderation and 'Teen Accounts'

Dualta Ó Broin, Director of Public Policy for Meta in Ireland, addressed specific concerns about content related to suicide and eating disorders. Responding to a question from Fine Gael TD Grace Boland about verification processes, Mr. Ó Broin acknowledged that "we can't obviously say that absolutely no content will slip through." However, he emphasized that Meta has "very strict enforcement in relation to proactive enforcement and reporting," and that, when it comes to recommendations, "that type of content is not allowed." Mr. Ó Broin also told the committee that Meta has "fundamentally changed" the way teenagers use its services, including Instagram and Facebook, and that the company is "constantly" innovating to address risks to underage users. These changes include "teen accounts," which limit the types of content appearing in a user's feed, along with additional parental controls that allow further restriction of material. Despite these assurances, the recent investigations by Coimisiún na Meán into Meta's recommender systems suggest that regulators remain unconvinced about the efficacy of current safeguards. The focus on these systems highlights a key area of contention: how platforms curate and push content to young audiences.

Regulatory Scrutiny Intensifies

The Oireachtas committee hearing took place just days after Coimisiún na Meán launched two new investigations into Meta's platforms, Facebook and Instagram. The regulator's focus is specifically on the recommender systems employed by the social media giant, which are central to how users discover and consume content. These investigations signal a significant escalation in regulatory oversight in Ireland of social media's impact on children. Coimisiún na Meán's action indicates a desire to go beyond self-regulation by tech companies and to impose external scrutiny on the algorithms that shape online experiences. The timing of these probes, coinciding with the committee's questioning, underscores the urgency and breadth of concerns about the digital environment for young people. Lawmakers and regulators alike are seeking concrete assurances and demonstrable changes in platform design and policy.

The Algorithm's Role in User Experience

At the heart of the debate lies the recommender algorithm, the technology that determines what content users see. For a platform like TikTok, where the company says 100 million pieces of content are uploaded daily, the algorithm is crucial for surfacing relevant material. TikTok's representative described it as a tool to ensure users encounter content aligned with their interests. However, critics and lawmakers often point to these same algorithms as potential drivers of addictive behavior and exposure to harmful material. The concern is that algorithms optimized for engagement may prioritize sensational or extreme content that keeps users hooked, regardless of its suitability for young audiences. Meta's assertion that it has fundamentally altered how teenagers use its platforms suggests an acknowledgment of these risks. Yet the ongoing regulatory investigations indicate that the effectiveness of these changes, and the algorithmic principles underlying them, remain subject to intense scrutiny and doubt.

Future of Online Child Protection

The exchanges between social media executives and Irish lawmakers highlight a global challenge: how to balance the benefits of online connectivity with the need to protect children. The denials of addictiveness, coupled with assurances of safety measures, represent the industry's current defense against mounting criticism and regulatory pressure. As Coimisiún na Meán continues its investigations, the outcomes could set important precedents for how social media platforms operate in Ireland and could influence regulatory approaches in other jurisdictions. The focus on recommender systems suggests a shift towards scrutinizing the core technology that drives user experience. Ultimately, the efficacy of measures like 'teen accounts' and parental controls, alongside the fundamental design of algorithms, will decide whether social media platforms can provide a safe and healthy environment for younger users. The coming months, as the regulator's investigations proceed, will shape the next steps in this evolving landscape.

The bottom line

- Social media companies, including Meta, TikTok, and Snapchat, have denied their platforms are addictive to children.

- Executives faced questioning from Irish lawmakers about harmful algorithms and inappropriate content.

- Meta claims to have fundamentally changed how teenagers use Instagram and Facebook, introducing 'teen accounts' and additional parental controls.

- Ireland's media regulator, Coimisiún na Meán, has launched investigations into Meta's recommender systems on Facebook and Instagram.

- The role of algorithms in content recommendation and potential for fostering compulsive usage remains a key point of contention.

- The regulatory scrutiny and parliamentary hearings signal an intensified focus on protecting children online.